IT infrastructure requirements for emerging applications differ significantly from those of traditional applications. Application developers are re-architecting their solutions, moving from monolithic architectures to microservices-based architectures. These applications, also known as cloud-native applications, require a complete redesign of provisioning, deployment and management strategies.

This article focuses on the use of micro-services architecture for upcoming applications, the challenging requirements these applications pose for provisioning, deployment and management, and how the latest trends in virtualization and cloud computing can help meet those requirements.

Traditional enterprise applications followed a monolithic architecture: the complete software ran in the context of one application. This architecture was simple to deploy and manage: resolve the dependencies, install the software, and the application was ready to use. At runtime, if the application needed to scale, a new instance of the complete application was instantiated, with a load balancer distributing load across the instances.

This architecture, though simple, soon posed multiple challenges. It required estimating the peak load and ensuring that the underlying infrastructure could handle it, which led to over-provisioning. Any scaling condition scaled all components of the application, even when the actual use case needed only a subset of them to scale. Different components could also have different resource requirements when scaled. An upgrade to one component required rebuilding and redeploying the complete application. If one component failed, the whole application failed.
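The over-provisioning argument can be made concrete with a little arithmetic. The sketch below uses three hypothetical components with invented CPU and throughput figures (none of these numbers come from a real system) and compares the CPU needed when every instance carries all components against the CPU needed when each component scales on its own.

```python
import math

# Hypothetical per-instance figures, chosen only for illustration.
COMPONENTS = {
    "catalog": {"cpu": 2, "capacity_rps": 500},
    "checkout": {"cpu": 4, "capacity_rps": 100},
    "reports": {"cpu": 8, "capacity_rps": 1000},
}


def instances_needed(load_rps: float, capacity_rps: float) -> int:
    """Instances required to serve a load, with at least one kept running."""
    return max(1, math.ceil(load_rps / capacity_rps))


def monolith_cpu(load_rps: dict) -> int:
    """Every instance contains all components, so the busiest one sets the count."""
    instances = max(
        instances_needed(load_rps[name], spec["capacity_rps"])
        for name, spec in COMPONENTS.items()
    )
    cpu_per_instance = sum(spec["cpu"] for spec in COMPONENTS.values())
    return instances * cpu_per_instance


def microservices_cpu(load_rps: dict) -> int:
    """Each component is scaled independently according to its own load."""
    return sum(
        instances_needed(load_rps[name], spec["capacity_rps"]) * spec["cpu"]
        for name, spec in COMPONENTS.items()
    )


if __name__ == "__main__":
    load = {"catalog": 500, "checkout": 400, "reports": 100}
    print("monolith CPUs:", monolith_cpu(load))
    print("micro-services CPUs:", microservices_cpu(load))
```

With this made-up load, only the checkout component is under pressure, yet the monolith has to replicate the other two components along with it.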

These shortcomings encouraged the transition of enterprise applications to a new architecture, the micro-services-based architecture, in which each component is designed as a separate service that is fully independent and self-contained. Thus, each component can be independently instantiated, scaled and upgraded. This architectural transformation also encouraged developing these micro-services with no affinity towards any operating system or hardware. They are generally deployed in a virtualized and highly elastic cloud environment, which is why these applications are aptly named cloud-native applications. These applications run in a distributed manner: some components run on customer premises, some at the edge and some in the cloud.

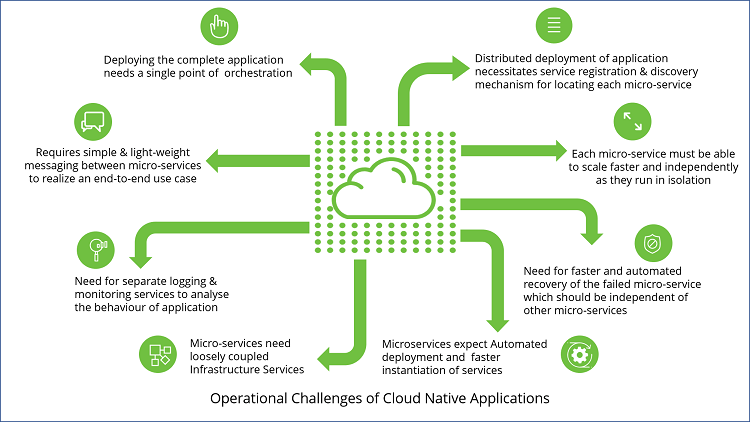

The transformation these cloud-native applications offer will bring unprecedented service agility and resiliency. But it also adds a high degree of complexity to their deployment and operations.

Advances in virtualization and cloud computing technologies are helping to resolve some of the challenges of cloud-native applications discussed in the previous section. This section discusses some of these technological advancements and how they help in the optimal deployment of cloud-native applications.

Most of these cloud-native applications are bundled as independent lightweight containers. Virtual machines take longer to start because each one packages an entire guest OS along with the application. Containers, on the other hand, carry only the application and its dependencies and share the host kernel. Thus, containers are lightweight and faster to start and to scale up or down. Using namespaces and cgroups, containers are able to offer the isolation context needed for micro-services. All these features make containers a good fit for running the individual micro-services of cloud-native applications. Docker containers are a popular choice for hosting micro-services.

Once container images for the individual micro-services exist, the next need is to orchestrate these containers. The orchestration solution needs to cater to a mix of physical and virtual servers and of private and public clouds. Kubernetes is the most popular container orchestration solution, offering automated deployment, scaling and fault management of containerized applications. Kubernetes can run seamlessly with both private cloud deployments (based on OpenStack, OpenShift, etc.) and public cloud deployments (like Azure, AWS, etc.). Kubernetes offers controllers which constantly monitor the current state of the application (each micro-service) and compare it with the desired state, detecting a failure or the need to scale a particular micro-service up or down. Thus, Kubernetes offers auto-healing and auto-scaling of each component of the application.
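The idea of comparing current state with desired state can be illustrated with a small reconciliation sketch. This is not Kubernetes code and does not use any Kubernetes API; `ServiceState`, `reconcile` and `apply_actions` are hypothetical names invented here to show the pattern, assuming state is reduced to a replica count per micro-service.

```python
from dataclasses import dataclass


@dataclass
class ServiceState:
    """Replica counts for one micro-service (illustrative model, not a Kubernetes type)."""
    desired: int
    running: int


def reconcile(states: dict) -> dict:
    """Return, per service, how many replicas to start (> 0) or stop (< 0)."""
    actions = {}
    for name, state in states.items():
        diff = state.desired - state.running
        if diff != 0:
            actions[name] = diff
    return actions


def apply_actions(states: dict, actions: dict) -> None:
    """Pretend to start or stop replicas by updating the observed state."""
    for name, diff in actions.items():
        states[name].running += diff


if __name__ == "__main__":
    cluster = {
        "cart": ServiceState(desired=3, running=1),    # two replicas crashed
        "search": ServiceState(desired=2, running=2),  # already healthy
        "auth": ServiceState(desired=1, running=4),    # load dropped, scale down
    }
    plan = reconcile(cluster)
    print(plan)
    apply_actions(cluster, plan)
    print(reconcile(cluster))  # nothing left to do once states converge
```

A real controller runs this comparison continuously, so the same loop covers both healing (replacing failed replicas) and scaling (following a changed desired count).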

Applications handling deep learning and data-intensive workloads require a high level of computing power, and GPUs play a crucial role in delivering it. Docker did not natively support access to GPUs. To run a container that uses GPUs, there are open-source solutions (e.g. Nvidia-Docker) which provide the container with the dependencies it needs to access the GPU. Thus, the underlying GPU infrastructure is exposed to the containers. These solutions have been integrated with Kubernetes, so deep learning algorithms running in Kubernetes pods can access GPUs as needed.
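As a rough illustration of how a pod asks for GPUs, the sketch below builds a pod manifest as a Python dictionary. It assumes the cluster runs the NVIDIA device plugin, which advertises GPUs under the resource name `nvidia.com/gpu`; the pod name, the image reference and the helper `gpu_pod_manifest` are hypothetical.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int) -> dict:
    """Build a minimal pod manifest that requests whole GPUs for one container."""
    if gpus < 1:
        raise ValueError("at least one GPU must be requested")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,  # hypothetical image reference
                    # GPUs are requested through resource limits, as whole units.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


if __name__ == "__main__":
    manifest = gpu_pod_manifest("trainer", "registry.example.com/trainer:latest", 2)
    print(json.dumps(manifest, indent=2))
```

The printed JSON could be saved to a file and submitted to a cluster; whether the pod is actually scheduled depends on GPU nodes being available there.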

Cloud-native applications focus only on how they are deployed; where they are deployed is not relevant. This requires modeling the deployment so that the service or application is portable to any cloud and is infrastructure agnostic. The industry group Organization for the Advancement of Structured Information Standards (OASIS) has introduced the Topology and Orchestration Specification for Cloud Applications (TOSCA) as a standard modeling language that delivers a declarative description of an application. The description includes all the components of the application: the software components, the networks, the subnets, the resource requirements, and the scaling and load-balancing policies. A descriptor file defined in TOSCA can be deployed by an orchestration engine in an automated manner on any kind of cloud environment.

With Kubernetes becoming the de facto choice for cloud-native application deployment, Helm, the package manager for Kubernetes, is also gaining momentum. With a single command, Helm can fetch software from repositories, install its dependencies, and install and configure the software itself. To design a fully automated and portable cloud-native deployment, both Helm and TOSCA are leveraged: TOSCA offers the high-level modeling, while Helm offers the low-level building blocks of the software.

The basic principle behind cloud-native applications is that each micro-service runs in the context of an individual container. Though these micro-services are self-contained and independent, they still need to exchange information and messages with each other as part of the execution of the application as a whole. One micro-service might publish an event while other micro-services listen for it.

For synchronous messaging among micro-services, lightweight APIs based on REpresentational State Transfer (REST) are used. They offer low overhead and low network latency. gRPC, a remote procedure call framework originally developed at Google, has also been gaining adoption because of the performance gains it offers. JSON- or XML-based messaging can be replaced by binary representation formats such as ProtoBuf or Avro.
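To give a feel for why binary formats are attractive, the sketch below encodes the same message as JSON and as a fixed binary layout and compares the sizes. Python's standard `struct` module is used purely as a stand-in: it is not ProtoBuf or Avro, which use their own schema languages and encodings. The message fields (`order_id`, `quantity`, `price`) are hypothetical.

```python
import json
import struct

# Hypothetical fixed schema: order_id (uint32), quantity (uint16), price (float64).
# "!" selects network byte order with standard sizes and no padding.
ORDER_FORMAT = struct.Struct("!IHd")


def encode_json(order_id: int, quantity: int, price: float) -> bytes:
    """Self-describing text encoding: field names travel with every message."""
    message = {"order_id": order_id, "quantity": quantity, "price": price}
    return json.dumps(message).encode("utf-8")


def encode_binary(order_id: int, quantity: int, price: float) -> bytes:
    """Schema-based binary encoding: only the values travel on the wire."""
    return ORDER_FORMAT.pack(order_id, quantity, price)


def decode_binary(payload: bytes) -> tuple:
    """Recover (order_id, quantity, price) using the shared schema."""
    return ORDER_FORMAT.unpack(payload)


if __name__ == "__main__":
    as_json = encode_json(1234, 3, 19.99)
    as_binary = encode_binary(1234, 3, 19.99)
    print(len(as_json), "bytes as JSON")
    print(len(as_binary), "bytes as binary")
    print(decode_binary(as_binary))
```

The trade-off is that both sides must agree on the schema ahead of time, which is the role `.proto` or Avro schema files play in practice.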

For asynchronous messaging, a standard store-and-forward pattern can work. RabbitMQ is one of the most widely used open-source message brokers offering such messaging. Kafka is another alternative that supports publish-subscribe asynchronous messaging between microservices. Kafka also persists the data streams, which makes the application more fault-tolerant.
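The store-and-forward and publish-subscribe ideas can be sketched with an in-memory broker. This is a toy model, not the RabbitMQ or Kafka API: `InMemoryBroker` and its methods are hypothetical, and a real broker adds persistence, acknowledgements and network transport.

```python
from collections import defaultdict, deque


class InMemoryBroker:
    """Toy publish-subscribe broker with one queue per subscriber and topic."""

    def __init__(self):
        # topic -> {subscriber name -> queue of pending messages}
        self._queues = defaultdict(dict)

    def subscribe(self, topic: str, subscriber: str) -> None:
        """Create a queue for the subscriber if it does not have one yet."""
        self._queues[topic].setdefault(subscriber, deque())

    def publish(self, topic: str, message) -> int:
        """Store the message for every subscriber; return how many queues got it."""
        queues = self._queues[topic]
        for queue in queues.values():
            queue.append(message)
        return len(queues)

    def poll(self, topic: str, subscriber: str):
        """Forward the oldest pending message, or None if nothing is waiting."""
        queue = self._queues[topic].get(subscriber)
        if queue:
            return queue.popleft()
        return None


if __name__ == "__main__":
    broker = InMemoryBroker()
    broker.subscribe("orders", "billing")
    broker.subscribe("orders", "shipping")
    broker.publish("orders", {"order_id": 1})
    # Each subscriber consumes at its own pace; the publisher never waits.
    print(broker.poll("orders", "billing"))
    print(broker.poll("orders", "shipping"))
```

Because messages wait in the subscriber's queue, a consumer that is briefly down can catch up later, which is the property that decouples the micro-services from each other.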

The distributed architecture of cloud-native applications relies on centralized logging tools to collect logs from the different micro-services and analyze them as a whole. Fluentd is an open-source data collector which offers unified logging across spread-out data sources. The logs can then be analyzed using open-source tools like Elasticsearch and Kibana. Whereas Fluentd collects data as events, thus unifying the logging infrastructure, another open-source tool, Prometheus, offers service and application monitoring in terms of metrics. It offers multi-dimensional data collection and querying, which is very useful for cloud-native applications.
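"Multi-dimensional" here means that every sample carries a set of labels and queries can slice along any of them. The sketch below models that idea with a plain in-memory counter. It is not the Prometheus client library or its query language; `LabeledCounter`, the metric name and the label names are hypothetical.

```python
from collections import Counter


class LabeledCounter:
    """Toy counter whose samples are keyed by a set of label=value pairs."""

    def __init__(self, name: str):
        self.name = name
        self._samples = Counter()

    def inc(self, amount: int = 1, **labels: str) -> None:
        """Increase the sample identified by this exact combination of labels."""
        self._samples[frozenset(labels.items())] += amount

    def query(self, **matchers: str) -> int:
        """Sum all samples whose labels include every given label=value pair."""
        wanted = set(matchers.items())
        return sum(
            value for key, value in self._samples.items() if wanted <= key
        )


if __name__ == "__main__":
    requests = LabeledCounter("http_requests_total")
    requests.inc(service="cart", status="200")
    requests.inc(service="cart", status="500")
    requests.inc(2, service="search", status="200")
    print(requests.query(service="cart"))   # all cart requests
    print(requests.query(status="200"))     # successful requests, any service
    print(requests.query())                 # everything
```

The same recorded data answers per-service and per-status questions without the application having to decide in advance which breakdown matters.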

Cloud-native applications need a service discovery and registry mechanism to find the location of a particular microservice at runtime. For the service registry, etcd works as a database of available service instances. For service discovery, open-source tools like CoreDNS can be leveraged. CoreDNS can be integrated directly with Kubernetes to detect the location of a micro-service, or it can interact with etcd.
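The registry and discovery roles can be sketched with a small in-memory class. This is a conceptual model only: it does not talk to etcd or CoreDNS, and `ServiceRegistry`, its methods and the addresses are hypothetical. Round-robin selection among instances is an assumption added here for illustration.

```python
class ServiceRegistry:
    """Toy registry mapping a service name to its live instance addresses."""

    def __init__(self):
        self._instances = {}  # service name -> list of "host:port" strings
        self._next = {}       # service name -> index of the next instance to hand out

    def register(self, service: str, address: str) -> None:
        """Record a new instance; registering the same address twice is a no-op."""
        addresses = self._instances.setdefault(service, [])
        if address not in addresses:
            addresses.append(address)

    def deregister(self, service: str, address: str) -> None:
        """Remove an instance that has stopped or failed its health check."""
        addresses = self._instances.get(service, [])
        if address in addresses:
            addresses.remove(address)

    def resolve(self, service: str) -> str:
        """Return one instance address, rotating through the registered ones."""
        addresses = self._instances.get(service)
        if not addresses:
            raise LookupError(f"no instances registered for {service!r}")
        index = self._next.get(service, 0) % len(addresses)
        self._next[service] = index + 1
        return addresses[index]


if __name__ == "__main__":
    registry = ServiceRegistry()
    registry.register("cart", "10.0.0.5:8080")
    registry.register("cart", "10.0.0.6:8080")
    print(registry.resolve("cart"))
    print(registry.resolve("cart"))
    registry.deregister("cart", "10.0.0.5:8080")
    print(registry.resolve("cart"))
```

Callers only ever use the service name, so instances can come and go as the orchestrator scales or heals the micro-service.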

Emerging networking applications in IoT and edge computing expect microservices to run anywhere in the network: some might run in the private clouds of different service providers, some in on-premise private cloud deployments, and some in the public cloud. So, cloud-native applications must support end-to-end orchestration across hybrid-cloud and multi-cloud deployments. Emerging open-source orchestration solutions (used to automate, design, orchestrate and manage services), like the Open Network Automation Platform (ONAP) and Open Source MANO (OSM), already support deployments in a multi-cloud or multi-VIM environment. They offer a single dashboard to design and manage services spread across multiple deployment sites in a network. Both projects are now working aggressively towards enhancing their solutions for deployment on multiple infrastructure environments, for example, OpenStack and its different distributions (e.g. vanilla OpenStack, Wind River) and public and private clouds (e.g. VMware, Azure).

Enterprise applications are rapidly shifting towards micro-services-based architecture. Service agility, scalability, and cloud-agnostic deployment are the key pillars of these applications. Applications will be deployed as a set of microservices, packaged in individual containers and dynamically orchestrated. Enterprises will encourage a vendor-neutral ecosystem in which such cloud-native applications can be built and deployed using open-source technologies. The Cloud Native Computing Foundation (CNCF) is an open-source software foundation that hosts many open-source projects (Kubernetes, Prometheus, CoreDNS, etc.) and is dedicated to making cloud-native computing a success!